Demystifying Model Context Protocol (MCP): AI Gets Smarter About Context

Ask an LLM to help you debug a Kubernetes incident. It does a decent job. Then, five minutes later, ask it a follow-up question — and watch it answer as if the previous conversation never happened.

That's not a bug in the model. That's the fundamental architectural problem with how most AI tools are wired up. Every prompt starts fresh. Decisions evaporate. Context has to be re-established from scratch every single time. At some point you stop thinking of the AI as a collaborator and start treating it like a very fast search engine that needs to be told the same things repeatedly.

Model Context Protocol (MCP) is the attempt to fix that. It's an open standard, introduced by Anthropic in November 2024 and now gaining adoption across the industry, that defines a common way for AI applications to connect to the tools and data sources around them, so that context can be shared, persisted, and prioritised instead of retyped. The goal is simple: make AI assistants behave less like stateless chatbots and more like reliable operators that actually remember what's going on.

Why context loss is such a concrete problem

Before getting into the protocol itself, it's worth being specific about what context loss actually costs you in practice.

In platform engineering, the problems show up fast. An agent that's helping with an incident can't carry forward what it already knows about the system unless you explicitly paste it in again. An assistant helping a developer scaffold a new service doesn't remember the naming conventions it helped establish three conversations ago. A model that's been asked to review a PR can't see the related Jira ticket unless a human manually bridges the gap.

These aren't edge cases. They're the normal operating conditions for any team using AI tools at scale. And the workaround — manually pasting context into every prompt — doesn't scale. It's also error-prone. Context that lives in chat threads and gets copied manually is context that gets lost, truncated, or quietly outdated.

MCP is the answer to: "what if the tools handled this, rather than the humans?"

What MCP actually is

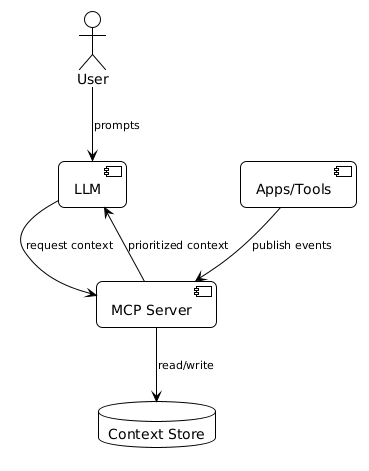

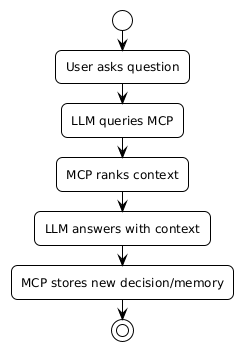

At its core, MCP is a client-server protocol built on JSON-RPC 2.0. It defines how an AI application (the host, which runs an MCP client for each connection) talks to external tools and data sources (the servers) in a standardised way.

Before MCP, every AI tool integration was bespoke. Your Slack bot talked to Slack one way. Your IDE assistant talked to your code repository another way. Your incident tool had its own custom integration. None of these could share context because they weren't speaking the same language.

MCP defines a common interface. An MCP server exposes capabilities (tools, resources, and prompts) in a standard format. An MCP client, which lives inside your AI assistant or other host application, can discover and call those capabilities without needing custom integration code for each one. And crucially, context can flow in both directions: servers feed data to the model, and the model's tool calls write changes back to the systems behind those servers.
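To make the discover-then-call flow concrete, here is a minimal in-process sketch. The `tools/list` and `tools/call` method names and the JSON-RPC 2.0 envelope come from the MCP specification; everything else (the `ToyServer` class, the `get_pod_status` tool, and its canned answer) is a hypothetical stand-in. A real server would sit behind a transport such as stdio or HTTP and would negotiate capabilities first.

```python
from typing import Any, Callable


class ToyServer:
    """Hypothetical in-process stand-in for an MCP server (no transport)."""

    def __init__(self) -> None:
        self._tools: dict[str, dict[str, Any]] = {}
        self._handlers: dict[str, Callable[..., str]] = {}

    def register_tool(self, name: str, description: str,
                      input_schema: dict[str, Any],
                      handler: Callable[..., str]) -> None:
        # Tool metadata is what a client sees when it discovers the server.
        self._tools[name] = {
            "name": name,
            "description": description,
            "inputSchema": input_schema,
        }
        self._handlers[name] = handler

    def handle(self, request: dict[str, Any]) -> dict[str, Any]:
        """Dispatch one JSON-RPC request and return the JSON-RPC response."""
        reply: dict[str, Any] = {"jsonrpc": "2.0", "id": request["id"]}
        method = request["method"]
        params = request.get("params", {})
        if method == "tools/list":
            reply["result"] = {"tools": list(self._tools.values())}
        elif method == "tools/call":
            handler = self._handlers.get(params["name"])
            if handler is None:
                reply["error"] = {"code": -32602, "message": "Unknown tool"}
            else:
                text = handler(**params.get("arguments", {}))
                reply["result"] = {
                    "content": [{"type": "text", "text": text}],
                    "isError": False,
                }
        else:
            reply["error"] = {"code": -32601, "message": "Method not found"}
        return reply


server = ToyServer()
server.register_tool(
    "get_pod_status",  # hypothetical tool backed by canned data
    "Return the phase of a pod.",
    {
        "type": "object",
        "properties": {"namespace": {"type": "string"},
                       "pod": {"type": "string"}},
        "required": ["namespace", "pod"],
    },
    lambda namespace, pod: f"{namespace}/{pod}: Running",
)

# Step 1: the client discovers what the server offers.
listing = server.handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
# Step 2: the client calls a tool it found, using the same envelope.
call = server.handle({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "get_pod_status",
               "arguments": {"namespace": "payments", "pod": "api-0"}},
})
```

The point of the sketch is that the client never needs to know anything about Kubernetes: it learns the tool's name and input schema from the listing and then calls it through the same generic envelope it would use for any other server.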

The three things MCP handles:

Tool calls: the model can invoke actions on connected systems. Query a database, search a knowledge base, trigger a deployment, create a Jira ticket. These are structured calls whose inputs are described by a JSON Schema.

Resources: the model can read structured data from connected systems. A resource might be a file, a configuration object, a schema, or any persistent piece of information the model needs to do its job.

Prompts: pre-defined prompt templates that a server publishes so they can be reused across sessions. Not the full system prompt, but reusable building blocks.
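On the wire, each of these three maps to its own JSON-RPC method. The sketch below builds one request of each kind. The method names (`tools/call`, `resources/read`, `prompts/get`) and parameter keys follow the MCP specification; the `make_request` helper, the tool name, the resource URI, and the prompt name are made up for illustration.

```python
import itertools
import json

_ids = itertools.count(1)  # JSON-RPC requests need a unique id per request


def make_request(method: str, params: dict) -> dict:
    """Wrap a method and its params in a JSON-RPC 2.0 request envelope."""
    return {"jsonrpc": "2.0", "id": next(_ids), "method": method, "params": params}


# Tool call: invoke an action, passing arguments that match its input schema.
tool_call = make_request("tools/call", {
    "name": "create_ticket",  # hypothetical tool name
    "arguments": {"project": "OPS", "summary": "Payments API returning 502s"},
})

# Resource read: fetch data identified by a URI.
resource_read = make_request("resources/read", {
    "uri": "config://payments/deployment",  # hypothetical URI scheme
})

# Prompt get: fetch a reusable template, filled in with arguments.
prompt_get = make_request("prompts/get", {
    "name": "incident_summary",  # hypothetical prompt name
    "arguments": {"service": "payments"},
})

for request in (tool_call, resource_read, prompt_get):
    print(json.dumps(request))
```

All three share the same envelope, which is what lets one client implementation talk to any server.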

The three properties that matter

An architecture built on MCP gives you three properties that address the context loss problem directly. The protocol itself only moves tools, resources, and prompts between clients and servers; the properties below come from what the servers behind it store and expose.

Context persistence: context survives beyond a single prompt. When a model looks up information via an MCP server, that information lives in the system behind the server and can be referenced again in future interactions. The context isn't trapped in the chat window.

Context sharing: tools and models can publish and consume the same context. An incident response tool and a change management tool can both read from and write to the same context store. When the incident tool records that a rollback was performed, the change management tool can see that without a human copying it across.

Context prioritisation: not all context is equally relevant at all times. Resources can carry optional annotations, such as a priority hint, so the right information surfaces at the right moment. You're not flooding the model's context window with everything; you're surfacing what's relevant to the current task.
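Here is one way those three properties could look in code. This is a sketch under loud assumptions: the `ContextStore` class, its JSON file format, and the tag and priority fields are all invented for illustration and are not defined by MCP. In a real setup this store would sit behind an MCP server and be read through resources and written through tools.

```python
import json
import tempfile
from pathlib import Path


class ContextStore:
    """Hypothetical shared context store backed by a JSON file."""

    def __init__(self, path: Path) -> None:
        self.path = path
        # Persistence: reload whatever earlier sessions or tools wrote.
        self.entries = json.loads(path.read_text()) if path.exists() else []

    def publish(self, source: str, text: str, tags: set[str], priority: float) -> None:
        """Record a piece of context and write it to disk immediately."""
        self.entries.append(
            {"source": source, "text": text, "tags": sorted(tags), "priority": priority}
        )
        self.path.write_text(json.dumps(self.entries))

    def relevant(self, tags: set[str], limit: int = 3) -> list[dict]:
        """Prioritisation: return only matching entries, highest priority first."""
        matches = [e for e in self.entries if tags & set(e["tags"])]
        return sorted(matches, key=lambda e: e["priority"], reverse=True)[:limit]


path = Path(tempfile.mkdtemp()) / "context.json"

# The incident tool records what happened.
incident_tool = ContextStore(path)
incident_tool.publish("incident-tool", "Rolled back payments to the previous release",
                      {"payments", "rollback"}, priority=0.9)
incident_tool.publish("incident-tool", "Dashboard link shared in channel",
                      {"payments"}, priority=0.2)

# Sharing: a different tool opens the same store later and sees the rollback.
change_tool = ContextStore(path)
top = change_tool.relevant({"rollback", "payments"}, limit=1)
print(top[0]["text"])
```

The `limit` argument is the important design choice: the caller asks for the top few entries for the task at hand rather than receiving the whole history.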

What this looks like for platform teams

In practice, MCP changes the architecture of how you connect AI to your toolchain.

Instead of one-off integrations where each AI tool talks to each system in its own way, you build MCP servers for your key systems — your Kubernetes clusters, your incident tooling, your internal developer platform, your secret store — and any AI client that understands MCP can connect to all of them with the same interface.

Your Backstage instance becomes an MCP server. Your ArgoCD deployment data becomes an MCP resource. Your runbooks become MCP prompts. The AI assistant working with your on-call engineer can pull context from all of these without the engineer having to paste anything in manually.
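The "same interface for every system" idea can be sketched as a small router. Everything here is a simplification and an assumption: the servers are plain Python callables rather than real MCP servers, the JSON-RPC envelope is left out for brevity, and the `catalog_owner` and `sync_status` tools with their canned answers are hypothetical.

```python
from typing import Any, Callable

# A server is modelled as a function from a request dict to a result dict.
Server = Callable[[dict[str, Any]], dict[str, Any]]


def make_server(tools: dict[str, Callable[..., str]]) -> Server:
    """Build a toy server that answers tools/list and tools/call."""
    def handle(request: dict[str, Any]) -> dict[str, Any]:
        if request["method"] == "tools/list":
            return {"tools": [{"name": name} for name in tools]}
        if request["method"] == "tools/call":
            params = request["params"]
            text = tools[params["name"]](**params["arguments"])
            return {"content": [{"type": "text", "text": text}]}
        raise ValueError(f"unsupported method: {request['method']}")
    return handle


class Assistant:
    """Toy host: discovers tools on every server, then routes calls by tool name."""

    def __init__(self, servers: dict[str, Server]) -> None:
        self.servers = servers
        self.routes: dict[str, str] = {}
        for server_name, server in servers.items():
            for tool in server({"method": "tools/list"})["tools"]:
                self.routes[tool["name"]] = server_name

    def call(self, tool: str, **arguments: Any) -> str:
        server = self.servers[self.routes[tool]]
        result = server({"method": "tools/call",
                         "params": {"name": tool, "arguments": arguments}})
        return result["content"][0]["text"]


assistant = Assistant({
    "backstage": make_server({"catalog_owner": lambda service: f"{service}: team-payments"}),
    "argocd": make_server({"sync_status": lambda app: f"{app}: Synced"}),
})

owner = assistant.call("catalog_owner", service="payments")
status = assistant.call("sync_status", app="payments")
print(owner, "|", status)
```

Adding a third system means registering one more server; the `Assistant` code does not change.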

The practical effect: assistants that actually know the current state of your system, can take actions against it, and carry that context forward across interactions. Less setup overhead at the start of every conversation. Fewer mistakes from stale context. Faster time from "what's wrong" to "here's what we did".

Where MCP is right now

MCP is an evolving standard. Anthropic published the initial spec; it's now being adopted and extended by other model providers and tool builders. The ecosystem of MCP servers is growing quickly — there are community-built servers for GitHub, Slack, Kubernetes, Jira, Confluence, and many more.

Not every AI tool supports it yet. But the direction is clear: the industry is converging on a standard for how models talk to the world. Teams that start thinking in terms of MCP architecture now — what systems would you expose as MCP servers, what context would you want to persist — are going to be ahead when the tooling catches up.

The stateless chatbot era is ending. What comes next looks a lot more like an operator that actually knows what it's working with.

The working code

If you're building MCP infrastructure for production — service account auth, per-caller tool scoping, rate limiting, audit logging, prompt injection detection — the companion repo has a complete gateway implementation you can wire into your own HTTP server.
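To give a feel for what such a gateway does before a tool call ever reaches a server, here is a deliberately small sketch of three of those concerns: per-caller tool scoping, rate limiting, and audit logging. It is not the companion repo's code. The `Gateway` class, its parameters, and the caller and tool names are all hypothetical, and the sliding-window limiter is just one simple choice among several.

```python
import time
from collections import defaultdict
from typing import Callable


class Gateway:
    """Hypothetical policy check that runs before a tool call is forwarded."""

    def __init__(self, scopes: dict[str, set[str]], max_calls: int,
                 window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.scopes = scopes              # caller -> tools it may invoke
        self.max_calls = max_calls        # allowed calls per window, per caller
        self.window_seconds = window_seconds
        self.clock = clock                # injectable so tests can control time
        self.calls: dict[str, list[float]] = defaultdict(list)
        self.audit_log: list[dict] = []

    def authorize(self, caller: str, tool: str) -> bool:
        now = self.clock()
        # Sliding window: keep only timestamps still inside the window.
        recent = [t for t in self.calls[caller] if now - t < self.window_seconds]
        self.calls[caller] = recent
        if tool not in self.scopes.get(caller, set()):
            decision = "denied:scope"
        elif len(recent) >= self.max_calls:
            decision = "denied:rate"
        else:
            recent.append(now)
            decision = "allowed"
        # Every decision is logged, including denials.
        self.audit_log.append(
            {"caller": caller, "tool": tool, "decision": decision, "at": now}
        )
        return decision == "allowed"


now = [0.0]  # fake clock so the example is deterministic
gateway = Gateway({"svc-oncall": {"get_pod_status"}}, max_calls=2,
                  window_seconds=60, clock=lambda: now[0])

burst = [gateway.authorize("svc-oncall", "get_pod_status") for _ in range(3)]
out_of_scope = gateway.authorize("svc-oncall", "trigger_deploy")
now[0] = 61.0  # the window has passed, so the caller may call again
after_window = gateway.authorize("svc-oncall", "get_pod_status")
```

Authentication and prompt injection detection are left out here; they would sit in front of and behind this check respectively.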