GitOps as a Product: Building Self‑Service with ArgoCD¶

Every platform team reaches the same inflection point. The Kubernetes clusters are stable, GitOps is working, ArgoCD is syncing everything cleanly — and then a developer submits a ticket asking the platform team to onboard their new service. Then another. Then a queue forms.

You've built good infrastructure. But it's not a product yet. It's a service that requires the platform team to be in the loop for every new workload. That doesn't scale, and it creates exactly the kind of toil that drains platform engineers and slows down everyone else.

The fix isn't to work faster. It's to make onboarding something developers can do themselves — within guardrails the platform team controls.

What self-service GitOps actually means¶

Self-service doesn't mean developers get unrestricted access to the cluster. It means developers can get their service running without filing a ticket, as long as they stay within the boundaries the platform defines.

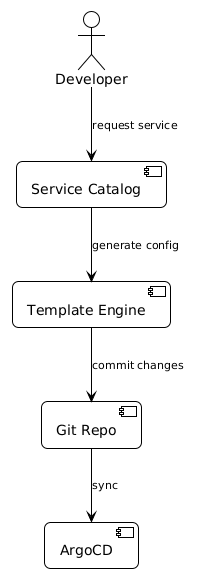

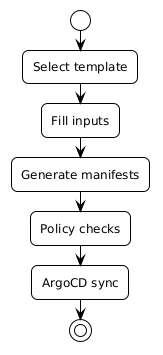

The practical model: a service catalogue where developers pick a template for their service type (API, worker, scheduled job), fill in the parameters specific to their service, and get back a complete GitOps-ready configuration that ArgoCD can sync. No custom YAML, no platform ticket, no waiting.

The platform team's job shifts from "onboard this service" to "maintain the templates and policies that make onboarding safe". That's a much better use of their time.

The three-layer model¶

The approach has three components that work together.

Service catalogue — the entry point for developers. In practice this can be a Backstage software template, a simple web form, or even a well-structured GitHub Action with inputs. The catalogue defines what parameters a developer provides: service name, team, language, environment targets, resource requests. Everything else is standardised.

ApplicationSet templates — the catalogue input feeds into an ApplicationSet template that generates the ArgoCD Application resources. Developers don't write YAML directly. The template produces consistent, policy-compliant configuration every time. The service name becomes the ArgoCD application name, the resource requests slot into pre-defined size tiers, the environments are derived from what the team is allowed to deploy to.

Policy-as-code guardrails — Kyverno or OPA policies run on the generated manifests before ArgoCD syncs them. The policy layer is where "self-service" doesn't mean "anything goes". Teams can't accidentally request a pod with no resource limits. Naming conventions are enforced. Required labels (team, cost-centre, environment) are validated. If the generated config violates policy, ArgoCD flags it before it reaches the cluster.

What the template looks like¶

An ApplicationSet with a Git generator reading team configuration files is the common starting point:

apiVersion: argoproj.io/v1alpha1

kind: ApplicationSet

metadata:

name: team-services

namespace: argocd

spec:

generators:

- git:

repoURL: https://github.com/your-org/platform-config

revision: HEAD

files:

- path: "teams/*/services/*.yaml"

template:

metadata:

name: '{{service.name}}-{{environment}}'

spec:

project: '{{team.name}}'

source:

repoURL: https://github.com/your-org/platform-config

targetRevision: HEAD

path: 'templates/{{service.type}}'

helm:

values: |

service:

name: {{service.name}}

image: {{service.image}}

replicas: {{service.replicas | default 2}}

resources:

tier: {{service.resourceTier | default "standard"}}

destination:

server: '{{cluster.server}}'

namespace: '{{team.name}}-{{environment}}'

syncPolicy:

automated:

prune: true

selfHeal: true

A developer onboards by adding a YAML file under teams/their-team/services/. That file becomes the source of truth for their service's configuration. They update it via PR. The ApplicationSet picks up the change and syncs.

Guardrails that don't get in the way¶

The policy layer is where most self-service implementations either get it right or create friction that kills adoption. The goal is policies that catch genuine problems without blocking developers who are doing the right thing.

The policies worth enforcing automatically:

Resource limits are required. Every container must have CPU and memory limits set — non-negotiable, this prevents noisy neighbour problems that affect everyone else on the cluster.

Team labels are required. team, environment, and cost-centre labels on every deployment. These power the chargebacks, the alerts, and the ownership queries.

Image registries are restricted. Images must come from your internal registry or an approved external source. No latest tags in production.

Namespace access is scoped. Each team's applications deploy to namespaces scoped to their team. Cross-namespace access requires an explicit exception.

What you don't want: policies that reject valid configurations because of minor formatting differences, or policies so strict that developers end up asking for exceptions on day one. Keep the policy list short and focused on things that actually matter for security and reliability.

The developer experience¶

When this is working well, a developer joining a new team can have their first service running in production within a day. They find the catalogue, pick the service template that matches what they're building, fill in their service name and repository URL, open a PR to the platform config repo, get it reviewed and merged. ArgoCD picks it up and syncs. Done.

No Slack message to the platform team. No ticket. No waiting.

For the platform team, every new service is consistent. Resource limits are always there. Labels are always there. The namespace is always right. The things they used to manually verify on every onboarding ticket are just... always correct.

That's the compound value. Not just that onboarding is faster for developers, but that the platform team gets confidence back. They're not approving every onboarding because they have to — they're free to focus on the harder infrastructure work because the routine stuff is handled.

The working code¶

The companion repo has a complete example with the three-layer model — service catalogue schema, ApplicationSet with matrix generators, and Kyverno validation. There's also a validate-service.py script for CI validation and a new-service.py scaffolder for generating definitions interactively.