Using AGENTS.md for Platform Engineering

Most platform incidents do not start with a complicated bug. They start with confusion.

One team follows one release path, another skips a check, and somebody quietly changes a naming rule without updating the docs. A few weeks later, nobody agrees on what "standard" means.

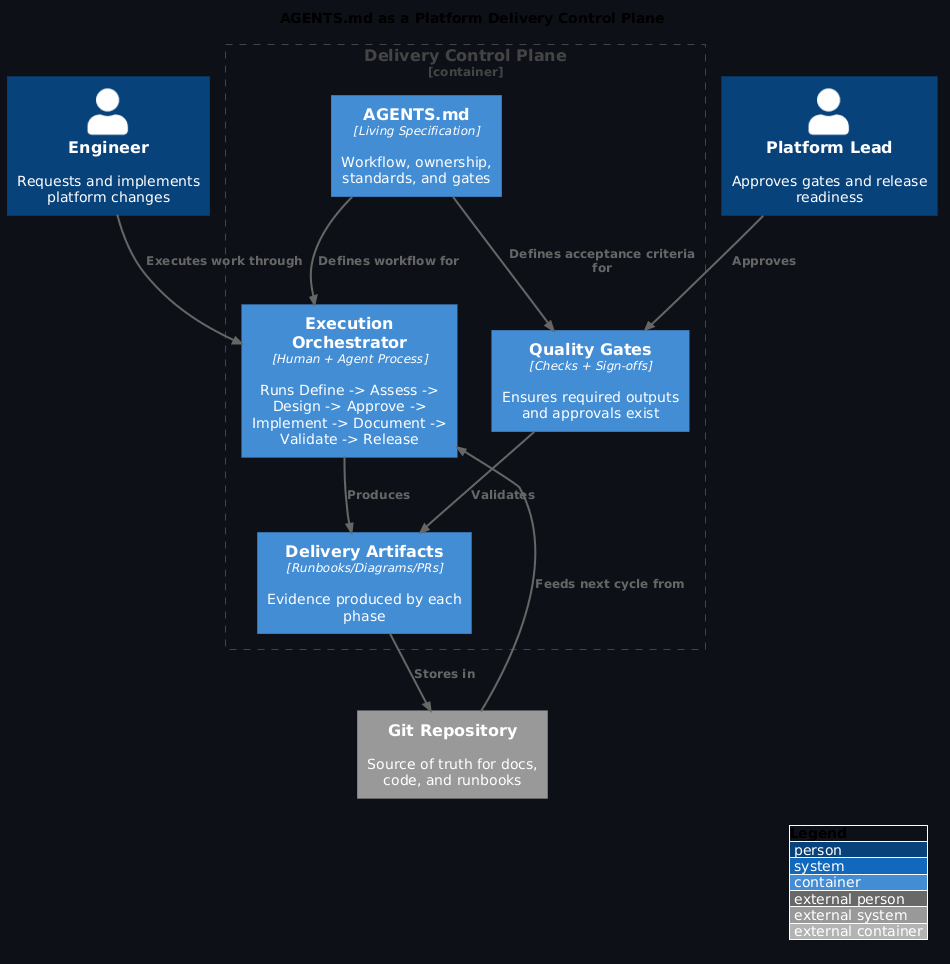

That is where AGENTS.md helps. It gives the team one shared playbook for planning, delivery, review, and release. Humans can follow it, and agents can follow it too.

The aim is straightforward: move changes from idea to production in a way that is calm, repeatable, and auditable.

Why AGENTS.md matters

Platform engineering gets expensive when every change follows a different path. AGENTS.md gives you a default path that people can trust.

In practice, it helps you:

- Set clear scope before work starts

- Keep delivery steps consistent across teams

- Build quality checks into the workflow

- Onboard new engineers faster

- Let agents help without losing control

What to include in AGENTS.md

Keep it short and specific. If it reads like policy prose, people will ignore it.

These six parts are usually enough:

- Mission and scope

- Workflow steps

- Owners (human or agent)

- Quality gates

- Standards and conventions

- Output locations (docs, repos, diagrams)

Practical workflow for platform changes

Use one repeatable flow for each meaningful platform change:

1. Define: write the change goal, scope, and success criteria.
2. Assess: capture current state, dependencies, and risks.
3. Design: pick the approach and record trade-offs.
4. Approve: confirm quality gates before implementation.
5. Implement: make the change with tests and automation.
6. Document: update runbooks, diagrams, and rollback steps.
7. Validate: run operational checks in non-production first.
8. Release: ship with owner sign-off and monitoring.

This sequence reduces rework because decisions are made early, before implementation starts to drift.

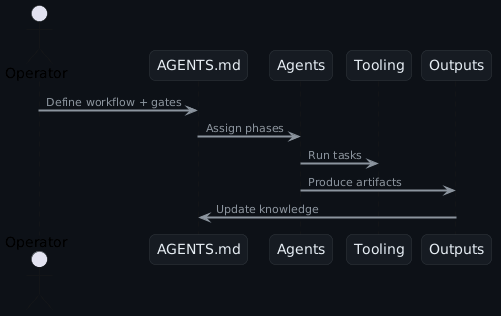

Agent collaboration flow

AGENTS.md sets the one rule that matters most: no phase moves forward until the previous phase has produced its required output. That single rule prevents many avoidable regressions.
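A minimal Python sketch of that rule, assuming each phase declares the outputs it must produce. The phase names follow the workflow above; the output names in `REQUIRED_OUTPUTS` and the `Change` class are hypothetical placeholders, not part of any tool.

```python
from dataclasses import dataclass, field

# Phases in the order used by the workflow above.
PHASES = ["Define", "Assess", "Design", "Approve",
          "Implement", "Document", "Validate", "Release"]

# Hypothetical required outputs per phase; replace with your own AGENTS.md rules.
REQUIRED_OUTPUTS = {
    "Define": {"goal", "scope", "success_criteria"},
    "Assess": {"current_state", "risks"},
    "Design": {"approach", "trade_offs"},
    "Approve": {"gate_signoff"},
    "Implement": {"tests_passing"},
    "Document": {"runbook", "rollback_steps"},
    "Validate": {"nonprod_checks"},
    "Release": {"owner_signoff"},
}


@dataclass
class Change:
    name: str
    phase_index: int = 0
    outputs: set = field(default_factory=set)

    @property
    def phase(self) -> str:
        return PHASES[self.phase_index]

    def missing_outputs(self) -> set:
        """Outputs the current phase still needs before the change can move on."""
        return REQUIRED_OUTPUTS[self.phase] - self.outputs

    def advance(self) -> str:
        """Move to the next phase only if the current phase produced its outputs."""
        missing = self.missing_outputs()
        if missing:
            raise RuntimeError(f"{self.phase} is missing: {sorted(missing)}")
        if self.phase_index == len(PHASES) - 1:
            raise RuntimeError(f"{self.name} is already in the final phase")
        self.phase_index += 1
        return self.phase


if __name__ == "__main__":
    change = Change("ingress-upgrade")
    change.outputs |= {"goal", "scope", "success_criteria"}
    print(change.advance())  # prints: Assess
```

Calling `advance()` before `success_criteria` exists raises an error that names the missing output, so the gap is visible before work moves on rather than discovered at release.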

A minimal AGENTS.md you can copy

Start with this and adapt it:

```markdown
# AGENTS.md

## Mission

- Keep platform delivery consistent and low risk

## Workflow

1. Plan → define scope, success criteria, risks
2. Survey → assess current state + gaps
3. Ideate → propose options + trade-offs
4. Review → approve approach + gates
5. Build → implement + automate
6. Document → runbooks + diagrams
7. Validate → operational review
8. Ship → release readiness

## Quality gates

- Every change has an owner
- Risks documented before build
- Docs updated before release

## Standards

- Naming: <team>-<service>-<env>
- Environments: dev → staging → prod
- Tooling: Helm + ArgoCD
```

If this reads like a checklist, that is intentional. Checklists are easier to follow when teams are busy or under pressure.
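The Standards section is easy to check mechanically. Here is a small Python sketch that validates the `<team>-<service>-<env>` naming rule and the dev → staging → prod promotion order. It assumes team and service names are lowercase alphanumeric with no hyphens of their own, since `-` is the separator; adjust the pattern if your names differ.

```python
import re
from typing import Optional

# Promotion order from the Standards section.
ENVIRONMENTS = ["dev", "staging", "prod"]

# Assumption: team and service contain no "-", because "-" separates the parts.
NAME_PATTERN = re.compile(r"(?P<team>[a-z0-9]+)-(?P<service>[a-z0-9]+)-(?P<env>[a-z]+)")


def parse_name(name: str) -> dict:
    """Split a <team>-<service>-<env> name into its parts, or raise ValueError."""
    match = NAME_PATTERN.fullmatch(name)
    if match is None:
        raise ValueError(f"{name!r} does not match <team>-<service>-<env>")
    parts = match.groupdict()
    if parts["env"] not in ENVIRONMENTS:
        raise ValueError(f"{name!r} uses unknown environment {parts['env']!r}")
    return parts


def next_environment(env: str) -> Optional[str]:
    """Return the environment a change is promoted to next, or None after prod."""
    index = ENVIRONMENTS.index(env)
    return ENVIRONMENTS[index + 1] if index + 1 < len(ENVIRONMENTS) else None
```

For example, `parse_name("payments-api-staging")` returns `{'team': 'payments', 'service': 'api', 'env': 'staging'}`, and `next_environment("staging")` returns `'prod'`. A check like this can run in CI so naming drift is caught before review rather than after.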

Practical examples

Each example maps directly to the workflow above.

1) Platform release checklist

- Why: reduce release risk and keep gates consistent

- What: release plan, rollback plan, communication checklist

- How: run one fixed checklist and require sign-off at each gate
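A minimal sketch of a fixed release checklist in Python, where a release counts as ready only once every gate has been signed off. The three gates come from the "What" line above; the owner names, and the rule that only a gate's owner may sign it off, are assumptions for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional

# The same gates for every release, taken from the "What" line above.
STANDARD_GATES = ("release plan", "rollback plan", "communication checklist")


@dataclass
class Gate:
    name: str
    owner: str
    signed_off_by: Optional[str] = None


@dataclass
class ReleaseChecklist:
    release: str
    gates: list = field(default_factory=list)

    def sign_off(self, gate_name: str, person: str) -> None:
        """Record a sign-off; assumes only the gate's owner may sign it."""
        for gate in self.gates:
            if gate.name == gate_name:
                if person != gate.owner:
                    raise PermissionError(f"{person} does not own {gate_name!r}")
                gate.signed_off_by = person
                return
        raise KeyError(gate_name)

    def pending(self) -> list:
        return [gate.name for gate in self.gates if gate.signed_off_by is None]

    def ready(self) -> bool:
        return not self.pending()


def new_checklist(release: str, owners: dict) -> ReleaseChecklist:
    """Build the fixed checklist; `owners` maps each gate name to a person."""
    return ReleaseChecklist(release, [Gate(name, owners[name]) for name in STANDARD_GATES])
```

Because every release starts from `STANDARD_GATES`, nobody can quietly drop a gate for one release; `pending()` shows exactly which sign-offs are still outstanding.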

2) Incident runbook

- Why: improve incident response speed and clarity

- What: roles, steps, and post-mortem template

- How: trigger the runbook and record actions as structured output
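One way to make "record actions as structured output" concrete: log each runbook action as a typed record and export the log as JSON Lines for the post-mortem. This is a Python sketch; the role names, step names, and field layout are assumptions, not a standard incident schema.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

# Hypothetical roles; use the ones your runbook defines.
ROLES = {"incident_commander", "comms_lead", "ops_lead"}


@dataclass
class Action:
    incident_id: str
    step: str
    role: str
    actor: str
    note: str
    at: str  # ISO 8601 timestamp in UTC


def record_action(log: list, incident_id: str, step: str, role: str,
                  actor: str, note: str) -> Action:
    """Append one structured action to the incident log and return it."""
    if role not in ROLES:
        raise ValueError(f"unknown role {role!r}")
    action = Action(incident_id, step, role, actor, note,
                    datetime.now(timezone.utc).isoformat())
    log.append(action)
    return action


def to_jsonl(log: list) -> str:
    """Serialise the log as JSON Lines (one action per line) for the post-mortem."""
    return "\n".join(json.dumps(asdict(action), sort_keys=True) for action in log)
```

Because every entry has the same fields, the post-mortem timeline can be rebuilt by sorting on `at` instead of reconstructing events from chat history.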

3) Infrastructure bootstrap

- Why: create new environments without one-off setup work

- What: baseline stack (ArgoCD, secrets, policies, monitoring)

- How: define the exact bootstrap sequence in AGENTS.md
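If the bootstrap sequence is written down as dependencies, the exact order can be derived rather than remembered. This Python sketch uses the standard library's `graphlib.TopologicalSorter` (Python 3.9+); the dependency edges between ArgoCD, secrets, policies, and monitoring are illustrative assumptions, so replace them with your own stack's.

```python
from graphlib import TopologicalSorter

# Illustrative dependencies: each component maps to what must be installed first.
BOOTSTRAP_DEPENDENCIES = {
    "argocd": set(),
    "secrets": {"argocd"},
    "policies": {"argocd"},
    "monitoring": {"argocd", "secrets"},
}


def bootstrap_order(dependencies: dict) -> list:
    """Return a deterministic install order; raises graphlib.CycleError on cycles."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    order = []
    while sorter.is_active():
        # Sort each batch of ready components so the sequence is repeatable.
        ready = sorted(sorter.get_ready())
        order.extend(ready)
        sorter.done(*ready)
    return order


if __name__ == "__main__":
    print(bootstrap_order(BOOTSTRAP_DEPENDENCIES))
    # prints: ['argocd', 'policies', 'secrets', 'monitoring']
```

Components in the same batch (here `policies` and `secrets`) do not depend on each other, so a bootstrap script could install them in parallel; sorting them only keeps the written sequence stable between runs.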

4) SLO review

- Why: make reliability reviews repeatable

- What: SLO attainment, regressions, and next actions

- How: generate monthly SLO summaries from metrics
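The core of a monthly SLO summary is a small calculation: attainment is good events divided by total events, and the share of error budget consumed is the observed failure rate divided by the allowed failure rate (1 minus the target). This Python sketch assumes you have already queried the monthly good and total event counts from your metrics system; the field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SloMonth:
    service: str
    target: float      # e.g. 0.999 for a 99.9% SLO; must be below 1.0
    good_events: int
    total_events: int

    @property
    def attainment(self) -> float:
        # Treat a month with no traffic as fully met.
        return self.good_events / self.total_events if self.total_events else 1.0

    @property
    def budget_consumed(self) -> float:
        """Observed failure rate divided by allowed failure rate (1.0 = budget spent)."""
        allowed = 1.0 - self.target
        if allowed <= 0:
            raise ValueError("target must be below 1.0 to have an error budget")
        return (1.0 - self.attainment) / allowed


def summarise(current: SloMonth, previous: SloMonth) -> dict:
    """One row of the monthly review: attainment, budget use, and regression flag."""
    return {
        "service": current.service,
        "attainment": round(current.attainment, 5),
        "met": current.attainment >= current.target,
        "budget_consumed": round(current.budget_consumed, 3),
        "regression": current.attainment < previous.attainment,
    }
```

For a 99.9% target with 999,500 good requests out of 1,000,000, attainment is 99.95% and half of the error budget is used (0.0005 / 0.001 = 0.5). If the previous month reached 99.98%, the row is flagged as a regression, which feeds the "next actions" part of the review.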

5) Sprint review deck (Marp)

- Why: remove manual slide preparation

- What: request volume, average handling time, top requester, top request type

- How: use agents to populate a Marp deck from metrics scripts
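A sketch of the Marp step in Python: compute the four figures from request records and write a Markdown deck that Marp can render. It assumes your metrics script yields one dict per request with `requester`, `type`, and `handling_hours` keys; those names are hypothetical. The `marp: true` front-matter directive and `---` slide separators are standard Marp Markdown.

```python
from collections import Counter
from statistics import mean


def build_deck(sprint: str, requests: list) -> str:
    """Render a Marp deck from request records.

    Assumption: each record is a dict with "requester", "type", and
    "handling_hours" keys, produced by your own metrics script.
    """
    volume = len(requests)
    avg_hours = mean(r["handling_hours"] for r in requests) if requests else 0.0
    top_requester = Counter(r["requester"] for r in requests).most_common(1)
    top_type = Counter(r["type"] for r in requests).most_common(1)
    slides = [
        f"# Sprint review: {sprint}",
        f"## Request volume\n\n{volume} requests",
        f"## Average handling time\n\n{avg_hours:.1f} hours",
        f"## Top requester\n\n{top_requester[0][0] if top_requester else 'n/a'}",
        f"## Top request type\n\n{top_type[0][0] if top_type else 'n/a'}",
    ]
    # Marp reads "marp: true" from the front matter and splits slides on "---".
    return "---\nmarp: true\n---\n\n" + "\n\n---\n\n".join(slides) + "\n"
```

Writing the returned string to a Markdown file gives a deck you can render with the Marp CLI or the Marp for VS Code extension; the agent's job reduces to running the metrics script and this function.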

Companion repo

Walkthroughs and scripts live here: https://github.com/polarpoint-io/ai-capabilities

How OpenClaw uses it (optional)

In OpenClaw, AGENTS.md acts as a shared source of truth for multiple agents. It defines:

- Which agent handles each phase

- The outputs required for each phase

- The gates a lead agent must approve

This keeps multi-agent execution safe and predictable.

Common anti-patterns

Avoid these mistakes:

- Too long: AGENTS.md should be a few screens, not a wiki

- Vague gates: "review done" is not a gate; define what done means

- No ownership: every phase needs a named owner

- Stale rules: update it when tooling or workflows change
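Some of these anti-patterns can be caught automatically. This Python sketch flags an AGENTS.md that is too long, lacks expected sections, or uses vague gate wording. The 150-line limit, the section list (the template's four sections plus Owners, from the list of parts to include), and the vague phrases are all assumptions to tune for your team.

```python
import re

# Assumptions to tune: roughly three screens of text, the expected sections,
# and phrases that describe a gate without saying what "done" means.
MAX_LINES = 150
REQUIRED_SECTIONS = ("Mission", "Workflow", "Owners", "Quality gates", "Standards")
VAGUE_GATE_PHRASES = ("review done", "looks good")


def lint_agents_md(text: str) -> list:
    """Return a list of human-readable problems found in an AGENTS.md file."""
    problems = []
    lines = text.splitlines()
    if len(lines) > MAX_LINES:
        problems.append(f"too long: {len(lines)} lines (limit {MAX_LINES})")
    # Collect level-two headings such as "## Quality gates".
    headings = {m.group(1).strip() for m in re.finditer(r"^##[ \t]+(.+)$", text, re.MULTILINE)}
    for section in REQUIRED_SECTIONS:
        if section not in headings:
            problems.append(f"missing section: {section}")
    for line in lines:
        if any(phrase in line.lower() for phrase in VAGUE_GATE_PHRASES):
            problems.append(f"vague gate: {line.strip()}")
    return problems
```

Run against the minimal template above, this reports `missing section: Owners`: the template keeps ownership as a quality gate rather than naming owners per phase, which is exactly the kind of gap the "No ownership" anti-pattern warns about.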

What you get

- Predictable platform delivery

- Lower operational risk

- Faster onboarding

- Consistent documentation

- A workflow that scales as agent use grows

Final note

AGENTS.md only works when teams treat it as part of delivery, not an afterthought. Keep it current, keep it short, and make it the first thing people check before they start work.