AI governance for platform teams: Agents in production without losing control

One of the recurring tensions at KubeCon EU 2026 was this: AI agents can deliver dramatic cost reductions and faster incident response, but deploying them without governance is how you end up with a $500k surprise bill or a cascade of auto-scaling clusters that never stops.

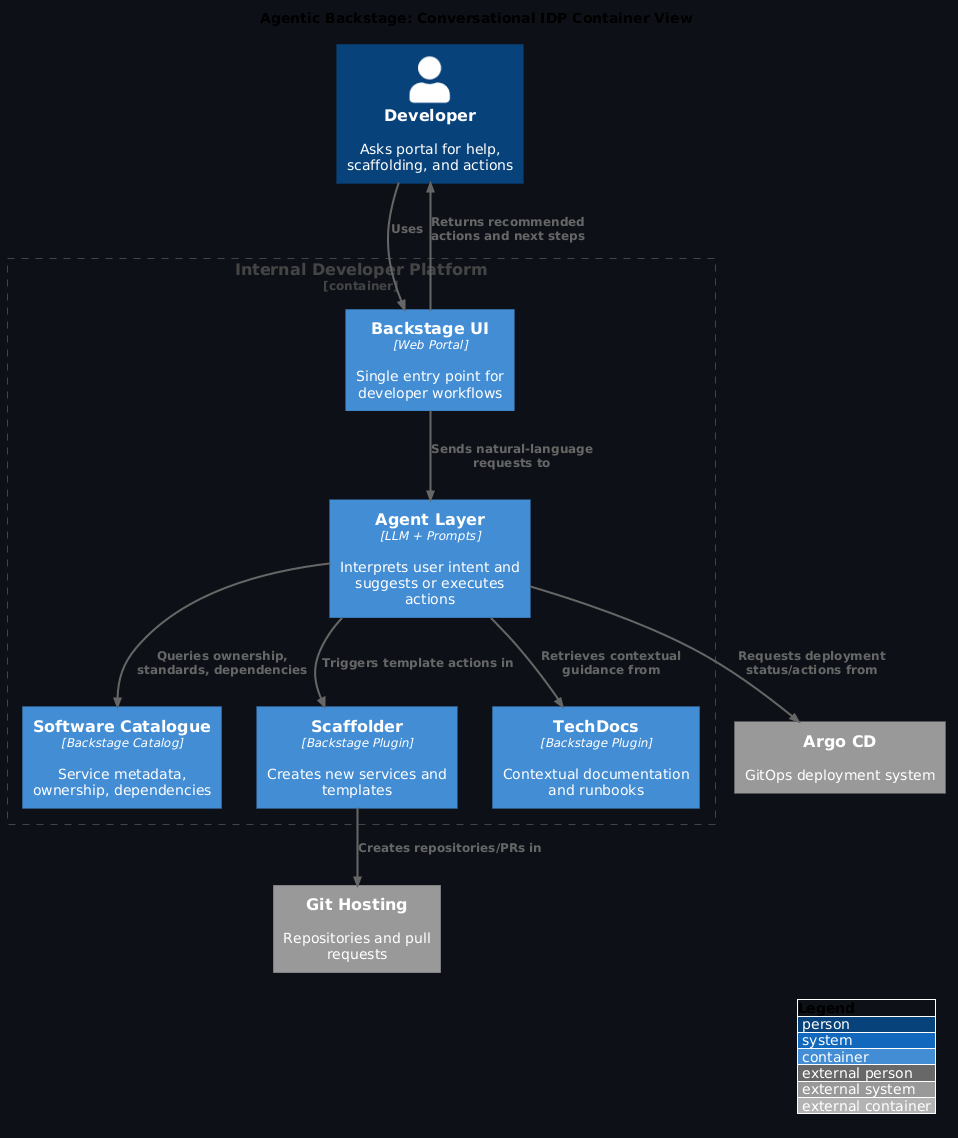

The strongest talks—especially RBI's work on moving from GitOps to AIOps and the broader keynote discussions on AI's role in platform engineering—showed a clear pattern: the question is not "should we use AI agents?" but "how do we make agents safe enough to trust with infrastructure?"

Quick takeaways

- Agents need hard boundaries: Not all decisions can be autonomous; infrastructure agents need to know exactly what decisions they can make (resource scaling, failure recovery, cost optimisation) versus what requires human review (risk-tier changes, major architectural decisions, compliance modifications)

- Observability is the foundation for trust: You cannot safely grant autonomy without visibility into exactly what an agent did, why, and what constraints it considered; this is non-negotiable for regulated environments

- Review workflows are governance: Adding a "human reviews major decisions" layer is not a bug or overhead—it's the core governance mechanism that makes AI-assisted operations work in production

- Agents should be organisationally aware: The best AI systems understand your risk model, cost allocation, and team structure; generic agents fail because they don't know what "reasonable" looks like in your context

What was getting in the way

Prior to KubeCon, organisations deploying AI agents ran into a set of hard problems:

- All-or-nothing autonomy — Either the agent is fully autonomous (risky) or fully manual (defeats the purpose). Few organisations had a clear middle ground

- Observability gaps — When an AI system makes a decision, logs often don't explain why, making it hard to detect errors or audit for compliance

- Context collapse — The AI system doesn't understand your risk model. It sees "this service needs more resources" and scales it without understanding if it's a pre-production test environment or a critical revenue system

- Governance bolted on after the fact — Organisations added approval workflows after agents were already making mistakes, leading to reactive restrictions rather than thoughtful design

RBI's approach (detailed in From GitOps to AIOps in regulated environments) solved this with a clear architecture:

- AI acts as a second pair of eyes during change reviews, not as an autonomous actor

- Every infrastructure change goes through a risk-aware promotion pipeline

- AI provides insights (cost estimates, failure predictions, blast radius analysis) to the human reviewer, who retains final say

- The system is observable: you can trace exactly what information the AI considered and what recommendation it made

This is not AI doing less work; it's AI doing different work: intelligence rather than autonomy.

The three layers of AI governance

Layer 1: Bounded decision contexts

Not all infrastructure decisions are equal. A production agent should be able to:

- Scale resource requests up or down (within bounds)

- Retry failed operations with exponential backoff

- Diagnose routine failures (pod crashes, network timeouts, dependency issues)

- Optimise resource utilisation (consolidate workloads, shed low-priority batch jobs)

But it should require human review for:

- Changes to risk tier or compliance classification

- Shifts between infrastructure providers (AWS → Azure)

- Modifications to network boundaries or security zones

- Cost optimisations that require architectural change

- Decisions that affect billing or quota allocations

Example boundary definition:

Tier 1 (Fully Autonomous):

- Scaling within ±50% of baseline resources

- Restarting failed services

- Adjusting monitoring thresholds

- Optimizing pod scheduling

Tier 2 (AI Recommendation + Human Review):

- Scaling beyond 50% baseline (suggests reason; human approves)

- Changing infrastructure provider (AI outlines cost/risk tradeoff; human decides)

- Modifying security group rules (AI explains the request; human validates)

- Cross-cluster workload migration (AI recommends based on cost; human decides)

Tier 3 (Fully Manual):

- Risk tier promotion (dev → staging → production)

- Compliance policy changes

- Architecture refactoring

- Disaster recovery activation

The key is that the boundaries are explicit and encoded in the system, not left to human judgment in the moment.
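One way to encode these boundaries is a small, deterministic lookup that runs before any agent action is executed. The sketch below is illustrative only: the action names, the `Tier` enum and `classify_action` are hypothetical, and the ±50% bound is taken from the example tiers above. Unknown actions fail closed to the manual tier.

```python
from enum import Enum


class Tier(Enum):
    AUTONOMOUS = 1    # Tier 1: agent may act on its own
    HUMAN_REVIEW = 2  # Tier 2: agent recommends, human approves
    MANUAL = 3        # Tier 3: humans only


# Hypothetical action names mirroring the example tiers above.
TIER_1_ACTIONS = {"restart_service", "adjust_monitoring_threshold", "optimise_pod_scheduling"}
TIER_2_ACTIONS = {"change_provider", "modify_security_group", "migrate_workload_cross_cluster"}

MAX_AUTONOMOUS_SCALE_PCT = 50.0  # the ±50% of baseline bound from Tier 1


def classify_action(action: str, scale_change_pct: float = 0.0) -> Tier:
    """Return the governance tier for an action; unknown actions fail closed."""
    if action == "scale":
        within_bounds = abs(scale_change_pct) <= MAX_AUTONOMOUS_SCALE_PCT
        return Tier.AUTONOMOUS if within_bounds else Tier.HUMAN_REVIEW
    if action in TIER_1_ACTIONS:
        return Tier.AUTONOMOUS
    if action in TIER_2_ACTIONS:
        return Tier.HUMAN_REVIEW
    # Tier 3 actions and anything unrecognised are never automated.
    return Tier.MANUAL
```

For example, `classify_action("scale", 30)` falls in the autonomous tier, while `classify_action("scale", 80)` is routed to human review.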

Layer 2: Observability as governance

The best AI governance mechanism is seeing exactly what the agent did and why.

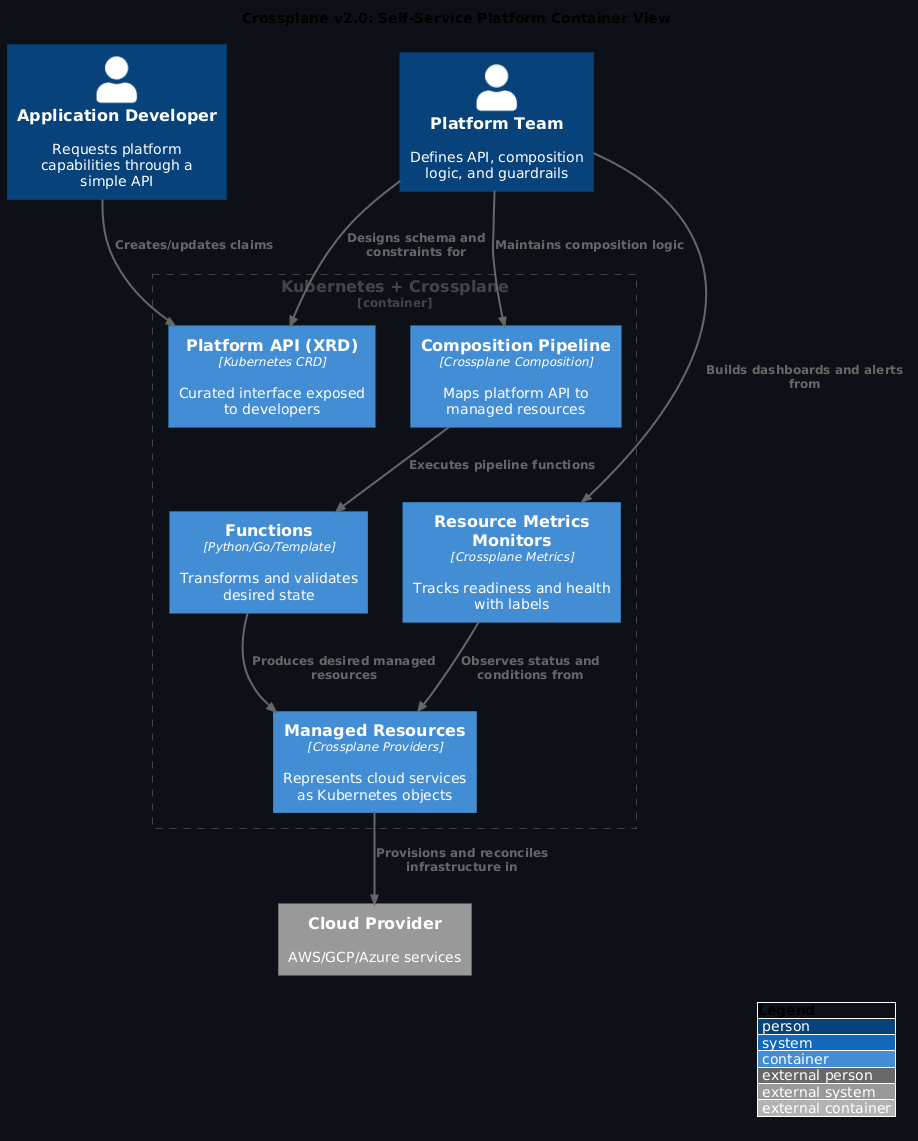

Crossplane v2 (covered separately in Crossplane v2: API-first platforms and compositional control planes) enables this with rich status conditions and event streams. When applied to AI-augmented operations, this means:

Every agent decision includes:

- What it was asked to do: "Diagnose why this service is slow"

- What it observed: "62% CPU, 4.2s p99 latency, 30% memory utilisation"

- What constraints it knew: "This is staging, so cost optimisation takes precedence"

- What it decided: "Recommend increasing CPU allocation from 500m to 1000m"

- What it didn't do and why: "Did not recommend increasing memory (not the bottleneck); did not recommend autoscaling (staging use is predictable)"

- Confidence level: "High confidence (prior similar cases: 47/51 correct)"

- Timestamp and context: "2026-03-25 14:23:41 UTC, requested by on-call automation"

This information flow is what enables the human reviewer to:

- Verify the AI reasoning is sound

- Spot cases where the AI misunderstood the context

- Build confidence that the system is safe to trust more autonomously

- Audit for compliance or incident post-mortems

Operationally, this looks like:

    # AI observation record
    apiVersion: ai.platform.example.com/v1
    kind: AgentDecision
    metadata:
      name: scale-api-service-2026-03-25-14-23-41
      namespace: platform-ai
    spec:
      decision_type: resource_scaling
      service: api-search
      environment: staging
      timestamp: "2026-03-25T14:23:41Z"
      context:
        cpu_utilisation: 62
        memory_utilisation: 30
        p99_latency_ms: 4200
        error_rate: 0.001
        cost_tier: staging-optimised
    status:
      action: ScalingRecommended
      current_cpu_request: "500m"
      recommended_cpu_request: "1000m"
      confidence: 0.92
      reasoning: "CPU contention correlates with latency spike; memory is not a bottleneck"
      approved: true
      approved_by: on-call-automation
      approved_at: "2026-03-25T14:23:55Z"
      executed_at: "2026-03-25T14:24:02Z"
    events:
      - timestamp: "2026-03-25T14:23:41Z"
        type: ObservationComplete
        message: "Collected metrics from 89 pods over 5 minutes"
      - timestamp: "2026-03-25T14:23:50Z"
        type: RecommendationGenerated
        message: "Generated scaling recommendation based on CPU analysis"
      - timestamp: "2026-03-25T14:23:55Z"
        type: ReviewedAndApproved
        message: "Approved by on-call automation (within bounded tier)"
      - timestamp: "2026-03-25T14:24:02Z"
        type: ExecutionComplete
        message: "Updated CPU request in Deployment spec"

With this level of transparency, platform teams can:

- Automatically approve routine decisions

- Alert on decisions that look suspicious (high confidence but unusual reasoning)

- Train the AI system (log unusual cases for model refinement)

- Satisfy compliance audits (full decision trail with reasoning)
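A triage rule over decision records could look roughly like the sketch below. The `decision_type` and `confidence` fields follow the AgentDecision example; `prior_similar_cases`, the thresholds and the `triage` function are assumptions for illustration. In particular, "unusual reasoning" is approximated here as high confidence backed by few comparable past decisions, which is a simplification and not something the talks prescribed.

```python
from dataclasses import dataclass


@dataclass
class DecisionRecord:
    decision_type: str
    confidence: float         # 0.0 to 1.0, as in the AgentDecision example
    prior_similar_cases: int  # hypothetical: count of comparable past decisions


# Illustrative thresholds; tune them against your own decision history.
AUTO_APPROVE_CONFIDENCE = 0.90
MIN_PRIOR_CASES = 20
ROUTINE_TYPES = {"resource_scaling", "service_restart"}


def triage(record: DecisionRecord) -> str:
    """Return 'auto_approve', 'alert', or 'human_review' for a decision record."""
    confident = record.confidence >= AUTO_APPROVE_CONFIDENCE
    well_supported = record.prior_similar_cases >= MIN_PRIOR_CASES
    if confident and not well_supported:
        # High confidence with little supporting history is treated as suspicious.
        return "alert"
    if confident and record.decision_type in ROUTINE_TYPES:
        return "auto_approve"
    return "human_review"


# The staging example above: confidence 0.92 with 51 prior similar cases.
staging_scale = DecisionRecord("resource_scaling", 0.92, 51)
print(triage(staging_scale))  # auto_approve
```

Anything that is neither clearly routine nor clearly suspicious falls through to human review, so the default path is the conservative one.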

Layer 3: Organisational alignment

The AI system needs to understand your organisational context: risk models, cost models, team structure, and strategic priorities.

RBI example (regulated banking):

- High-cost risk tier: "This change affects core banking systems; requires multi-layer approval"

- Medium-cost risk tier: "This change is isolated to a cluster or namespace; can proceed with on-call review"

- Low-cost risk tier: "This change is ephemeral (testing, dev); autonomous operation within cost limits"

Sony example (platform product):

- Producer team decision: "My team owns this infrastructure; I can make scaling decisions autonomously"

- Consumer team decision: "This is provided infrastructure; I can ask for scaling but not make decisions"

- Cost-sensitive environment: "Optimise for utilisation first; only auto-scale if utilisation >80%"

The AI needs to know:

    # Organisational context for AI
    apiVersion: governance.platform.example.com/v1
    kind: InsecurityModel
    metadata:
      name: production-ai-governance
    spec:
      riskTiers:
        - name: production-banking
          level: critical
          autonomousActions: []  # No autonomous actions in banking systems
          requireApproval: true
          approverGroups: [security-team, on-call-lead]
          maxAutoScalingFactor: 1.2  # Max 20% increase without approval
          costThresholdPerHour: 500  # Alert if hourly cost would exceed $500
        - name: production-services
          level: high
          autonomousActions:
            - restart-failed-services
            - scale-within-50-percent
            - optimize-scheduling
          requireApproval: false
          autorollbackOnError: true
          costThresholdPerHour: 1000
        - name: staging
          level: medium
          autonomousActions:
            - all-scaling
            - all-optimization
            - cost-driven-consolidation
          requireApproval: false
          costThresholdPerHour: 500  # Auto-stop if exceeding $500/hr
        - name: development
          level: low
          autonomousActions: [all]  # Minimal constraints; cost + safety only
          costThresholdPerHour: 200  # Hard limit at $200/hr

With this context, the AI system can reason: "This is a production banking system, so I should not auto-scale it; I will generate a detailed recommendation for the on-call lead instead."
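As a rough sketch of how an agent might consult this context before acting, the snippet below mirrors three of the tiers in plain Python. The `RiskTier` class and `decide` function are hypothetical, and the values are copied from the example spec. As a simplification, exceeding `costThresholdPerHour` always hands the decision to a human here, whereas the spec comments describe different behaviours per tier (alert, auto-stop, hard limit).

```python
from dataclasses import dataclass, field


@dataclass
class RiskTier:
    name: str
    autonomous_actions: set = field(default_factory=set)
    require_approval: bool = False
    cost_threshold_per_hour: float = 0.0


# Values copied from the example spec; the Python structure itself is an assumption.
TIERS = {
    "production-banking": RiskTier("production-banking", set(), True, 500),
    "production-services": RiskTier(
        "production-services",
        {"restart-failed-services", "scale-within-50-percent", "optimize-scheduling"},
        False,
        1000,
    ),
    "development": RiskTier("development", {"all"}, False, 200),
}


def decide(tier_name: str, action: str, projected_cost_per_hour: float) -> str:
    """Return 'execute' or 'recommend' (hand off to a human) for a proposed action."""
    tier = TIERS.get(tier_name)
    if tier is None or tier.require_approval:
        return "recommend"  # unknown or approval-gated tiers never auto-execute
    if projected_cost_per_hour > tier.cost_threshold_per_hour:
        return "recommend"  # over the hourly cost threshold
    allowed = "all" in tier.autonomous_actions or action in tier.autonomous_actions
    return "execute" if allowed else "recommend"
```

With these values, `decide("production-banking", "scale-within-50-percent", 10)` yields a recommendation for a human, while the same action in `production-services` at $400/hr is executed.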

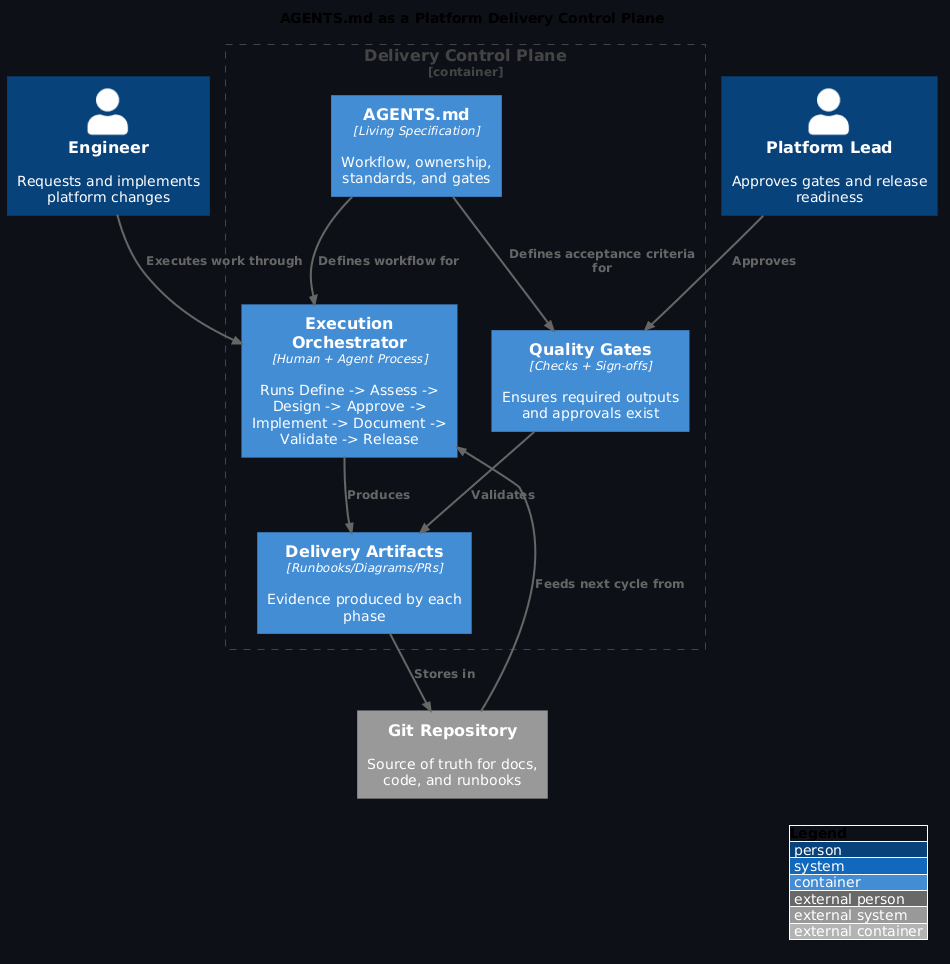

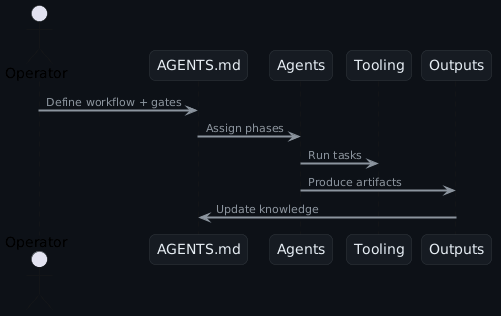

Pattern matching: AI review layers

The pattern that emerged from KubeCon talks (especially RBI) is that AI does its best work when positioned as a review layer, not an execution layer.

Traditional GitOps pipeline:

    Code PR → CI Tests → CD Pipeline → Deployed

AI-augmented pipeline (RBI pattern):

    Code PR → CI Tests → AI Review → CD Pipeline → Deployed → Runtime AI (AIOps)
                             ↓
                  [Explains risks, cost,
                   prior failure patterns,
                   dependency impacts]

In this role:

- AI explains risks in human terms ("This change affects 40 services downstream; 3 have had incidents in the past month")

- AI suggests rollback strategies ("If this fails, consider rolling back in this order")

- AI validates constraints ("This change respects all required SLOs and cost limits")

- Human approves with confidence and context

At runtime (AIOps):

    Metric Spike → Runtime AI → Issue Diagnosis → Recommendation → Approval → Action
                   [< 100ms]    [< 1s]            [< 10s]          [Varies]   [Exec]

AI diagnoses issues at machine speed, but human approval remains the gate before execution.
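The approval gate itself can be very small. The following is a minimal sketch, assuming the approval step is a callback that returns the approver's identity or `None`; the function name and the callback signatures are hypothetical. The point it illustrates is that execution is unreachable without an approval, and that the approver is recorded alongside the outcome.

```python
from typing import Callable, Optional


def run_with_approval_gate(
    recommendation: str,
    request_approval: Callable[[str], Optional[str]],
    execute: Callable[[str], None],
) -> dict:
    """Execute a recommendation only if an approver identity is returned."""
    approver = request_approval(recommendation)  # None means rejected or timed out
    if approver is None:
        return {"recommendation": recommendation, "executed": False, "approved_by": None}
    execute(recommendation)
    return {"recommendation": recommendation, "executed": True, "approved_by": approver}


# Usage with stand-in callbacks; a real system would page a human or call a review tool.
applied = []
result = run_with_approval_gate(
    "Increase CPU request from 500m to 1000m",
    request_approval=lambda rec: "on-call-lead",
    execute=applied.append,
)
```

The returned dictionary is the kind of record that would feed the decision trail described under Layer 2.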

Practical action items

- Define your decision tiers explicitly — What decisions are autonomous, what requires review, what is never automated? Document this as policy before deploying agents

- Implement observability first — Before granting any autonomy, ensure you can trace every agent decision. This is your audit trail and your safety mechanism

- Start with review workflows, not autonomous execution — Position AI as a recommendation engine that humans review and approve. You can expand autonomy later once trust is built

- Encode organisational context — Your AI needs to know about risk tiers, cost models, and team structure. Generic agents will fail

- Use bounded contexts for autonomous decisions — Resource scaling within 50% of baseline is safer than scaling unbounded

- Monitor AI reasoning quality — Log cases where the AI makes suggestions that are wrong. Use these to refine the system

- Set hard cost limits — Autonomous cost optimisation can go wrong fast; put caps in place (e.g., "no single decision costs more than $X")

- Build approval workflows with AI hints — The review process should be fast (< 1 minute) but thorough; AI provides context so humans can decide quickly

- Test failure modes deliberately — What happens if the AI system is wrong? Can you automatically rollback? How will you detect the problem?

- Plan for opacity and interpretability — As AI systems get more complex, some decisions will be hard to explain. Decide in advance which decisions require interpretability vs. which can be trusted based on outcomes
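Two of the items above, hard cost limits and deliberate failure testing, can be combined into one guard around any autonomous change. This is a sketch under stated assumptions: `apply_with_guardrails`, `CostCapExceeded` and the callback parameters are hypothetical names, and the health check is whatever signal your platform already trusts.

```python
from typing import Callable


class CostCapExceeded(Exception):
    """Raised when a single decision would cost more than the configured cap."""


def apply_with_guardrails(
    estimated_cost: float,
    cost_cap: float,
    apply: Callable[[], None],
    is_healthy: Callable[[], bool],
    rollback: Callable[[], None],
) -> str:
    """Apply a change under a hard cost cap and roll back if the health check fails."""
    if estimated_cost > cost_cap:
        raise CostCapExceeded(f"estimated {estimated_cost} exceeds cap {cost_cap}")
    apply()
    if is_healthy():
        return "applied"
    rollback()
    return "rolled_back"


# Deliberately simulate a bad change to confirm the rollback path works.
state = {"cpu": "500m"}
outcome = apply_with_guardrails(
    estimated_cost=40.0,
    cost_cap=100.0,
    apply=lambda: state.update(cpu="1000m"),
    is_healthy=lambda: False,  # simulated failure
    rollback=lambda: state.update(cpu="500m"),
)
print(outcome, state)  # rolled_back {'cpu': '500m'}
```

Running the failure case on purpose, as in the usage above, is the cheapest way to find out whether the rollback path actually restores the previous state.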

Scaling governance: from bounded agents to governed platforms

The picture that emerged across RBI, Sony, and the keynotes is this: you don't scale by making agents more autonomous; you scale by making governance more sophisticated.

The goal is not "fully autonomous AI systems managing infrastructure" but rather "humans and AI systems cooperating in ways that are safe, auditable, and aligned with business constraints."

RBI's approach—AI as a second pair of eyes reviewing changes—is not a compromise. It's the actual production-grade pattern: fast, safe, auditable, and aligned with how regulated environments work anyway.

This ties directly into platform teams being product teams (see Platform teams are product teams): the "product" your platform offers to application teams includes not just infrastructure APIs but also governance built into those APIs.

See also:

- From GitOps to AIOps in regulated environments (RBI's specific architecture and decision patterns)

- Crossplane v2: API-first platforms and compositional control planes (how control planes provide the foundation for governed autonomy)

- KubeCon EU 2026: what actually mattered (broader context on AI governance themes from keynotes)

- KubeCon EU 2026 event notes (full conference coverage)