KubeCon EU 2026: what actually mattered

KubeCon had no shortage of announcements, but the interesting part was not the volume. It was the convergence.

Across keynotes, maintainer updates, end-user sessions, and lightning talks, the same themes kept reappearing from different angles: platform teams acting more like product teams, AI moving into operational workflows, policy and governance becoming more automated but also more bounded, and internal platforms shifting away from ticket queues toward self-service APIs and reusable capability marketplaces.

If I strip away the noise, this is what I would actually take back to a platform team.

The biggest takeaways

1. AI moved from novelty to operations

The strongest examples were not "AI writes code" demos. They were operational workflows where AI reduced real toil.

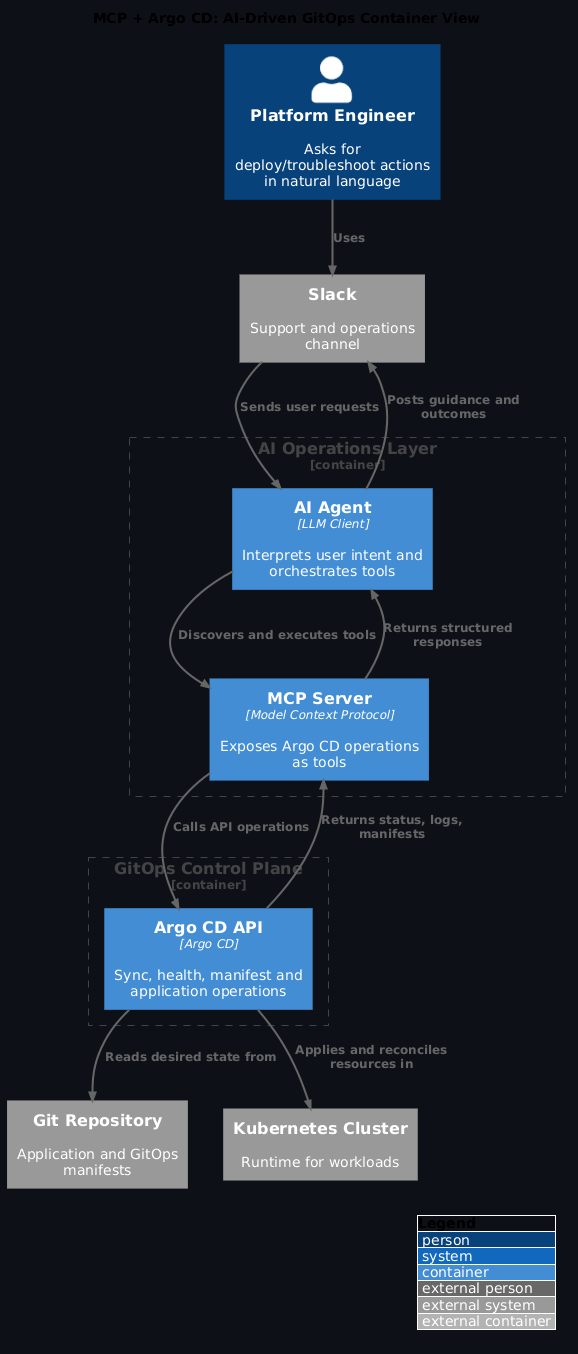

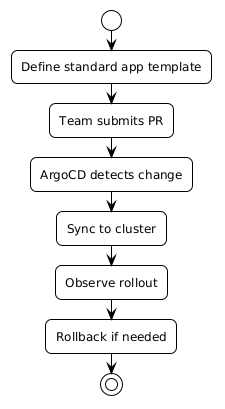

In the MCP + Argo CD talk, the standout point was this: teams got better adoption when they put AI in existing support channels, not in yet another portal tab. That sounds obvious in hindsight, but it is easy to miss when teams are excited by shiny UI work.

The same pattern showed up elsewhere too:

- Backstage is moving toward a multi-surface operating model across UI, CLI, and MCP tooling.

- Kyverno and Kagent showed policy operations becoming agent-assisted and closed-loop.

- TAG DevEx is explicitly studying AI-assisted development based on real usage data rather than hype.

- Multiple sessions treated agent experience as a real platform design concern, not just a gimmick.

If your engineers already troubleshoot in Slack, keep them there. Bring the capability to their workflow.

2. Platform APIs beat ticket queues

The Crossplane session made this painfully clear. Most teams still lose days waiting for routine infra changes because requests are encoded as tickets, not APIs.

Crossplane v2.0’s project model is a pragmatic step forward:

- one project for API definitions, composition logic, and tests

- local development loop that is fast enough to use daily

- clearer path from platform intent to production behaviour

The big shift is cultural as much as technical: platform teams stop shipping "internal services" and start shipping products.

That same lesson was reinforced even more directly by the self-service platform session from DigitalOcean. Their core point was sharp: Kubernetes was not the bottleneck, the operating model was. Once environment creation moved from ticket queue to Backstage + Argo Events + Argo Workflows + virtual clusters + Kyverno guardrails, provisioning dropped from days to minutes.

This was one of the clearest signals of the conference: self-service is no longer a vague aspiration. It is a concrete architecture pattern.
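To make the ticket-versus-API contrast concrete, here is a minimal Python sketch of an environment request expressed as a typed object and checked against guardrails before anything is provisioned. It is not the architecture shown in either session: `EnvironmentRequest`, `validate`, the allowed sizes, and the TTL limit are hypothetical names and values picked for illustration. In a real platform those rules would more likely live in a policy engine than in constants.

```python
from dataclasses import dataclass

# Hypothetical guardrails for illustration only.
ALLOWED_SIZES = {"small", "medium"}
MAX_TTL_HOURS = 72


@dataclass
class EnvironmentRequest:
    """What a developer submits instead of a free-text ticket."""
    team: str
    size: str
    ttl_hours: int


def validate(request):
    """Return a list of guardrail violations; an empty list means 'go ahead'."""
    errors = []
    if not request.team:
        errors.append("team is required")
    if request.size not in ALLOWED_SIZES:
        errors.append(f"size must be one of {sorted(ALLOWED_SIZES)}")
    if not 0 < request.ttl_hours <= MAX_TTL_HOURS:
        errors.append(f"ttl_hours must be between 1 and {MAX_TTL_HOURS}")
    return errors


good = EnvironmentRequest(team="team-search", size="small", ttl_hours=24)
bad = EnvironmentRequest(team="team-search", size="xlarge", ttl_hours=200)

for request in (good, bad):
    problems = validate(request)
    status = "accepted" if not problems else "rejected: " + "; ".join(problems)
    print(f"{request.size}/{request.ttl_hours}h -> {status}")
```

The point of the shape is that a rejected request gets an immediate, specific answer rather than a queue position.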

3. Developer portals are becoming conversational and multi-surface

Backstage sessions pointed in one direction: discovery and self-service are better when they feel like a conversation, not a form wizard.

That does not mean deleting structure. It means using structure behind the scenes while exposing a simpler interface to engineers.

The practical takeaway is to improve catalogue quality first. If metadata is stale, any agent layer will surface the mess faster.
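A catalogue hygiene check does not need to be sophisticated to be useful. The sketch below is a minimal Python example, assuming a simplified record with an owner and a last-reviewed date. `CatalogEntry`, `find_problems`, and the 180-day threshold are hypothetical and do not reflect the Backstage catalog schema or any tool shown at the conference.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class CatalogEntry:
    """Hypothetical, simplified stand-in for a service catalogue record."""
    name: str
    owner: Optional[str]
    last_reviewed: date


def find_problems(entries, today, max_age_days=180):
    """Return {entry name: [problem, ...]} for entries that need attention."""
    problems = {}
    for entry in entries:
        found = []
        if not entry.owner:
            found.append("missing owner")
        if today - entry.last_reviewed > timedelta(days=max_age_days):
            found.append("metadata not reviewed recently")
        if found:
            problems[entry.name] = found
    return problems


entries = [
    CatalogEntry("payments-api", "team-payments", date(2026, 3, 1)),
    CatalogEntry("legacy-batch", None, date(2024, 11, 15)),
    CatalogEntry("search-indexer", "team-search", date(2025, 1, 10)),
]

report = find_problems(entries, today=date(2026, 4, 1))
for name, found in report.items():
    print(f"{name}: {', '.join(found)}")
```

Running something like this on a schedule gives a concrete backlog to clear before an agent starts answering questions from the same data.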

The more mature version of this idea came from the Backstage maintainer update: platform teams should stop thinking in terms of a single portal UI and start thinking in terms of one platform model exposed through multiple operating surfaces. That is a much stronger design direction than just adding chat to an existing screen.

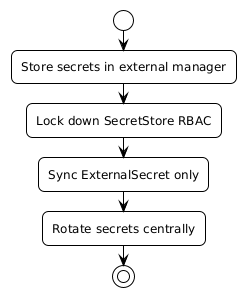

4. Governance is becoming a workflow, not just a rule set

One of the stronger late-conference themes was that policy is no longer just admission control.

Kyverno’s direction, especially in the multi-cluster governance talk, was toward full policy lifecycle management:

- validation

- mutation

- generation

- image verification

- reporting

- exemptions

- cleanup

What made the session useful was the framing around operator workflow. The real problem was not only writing policies. It was checking them, testing them, troubleshooting them, and doing that across many clusters without drowning in manual work.

That is where agentic tooling becomes genuinely useful: not replacing deterministic policy enforcement, but reducing the human overhead around it.

5. Observability is getting more contextual

The Crossplane metrics discussion landed well because it moved beyond aggregate counts.

"15 clusters unhealthy" is not enough. Teams need:

- which clusters

- which team owns them

- how long they have been degraded

- what changed around the same time

This is where label strategy and cardinality discipline matter. Better questions, not just more dashboards.
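As a rough illustration of the difference between a count and a contextual answer, the Python sketch below groups unhealthy clusters by owning team and reports how long each has been degraded and what changed last. The `ClusterStatus` fields and the sample data are assumptions made for this example; they are not Crossplane metrics or labels.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class ClusterStatus:
    """Hypothetical status record; field names are illustrative."""
    name: str
    team: str
    healthy: bool
    degraded_since: Optional[datetime]
    last_change: str


def unhealthy_by_team(statuses, now):
    """Group unhealthy clusters by team as (name, minutes degraded, last change)."""
    grouped = defaultdict(list)
    for status in statuses:
        if status.healthy:
            continue
        minutes = int((now - status.degraded_since).total_seconds() // 60)
        grouped[status.team].append((status.name, minutes, status.last_change))
    return dict(grouped)


now = datetime(2026, 4, 1, 12, 0)
statuses = [
    ClusterStatus("prod-eu-1", "payments", False, datetime(2026, 4, 1, 11, 15), "node pool upgrade"),
    ClusterStatus("dev-us-2", "search", False, datetime(2026, 4, 1, 9, 0), "policy rollout"),
    ClusterStatus("prod-us-1", "payments", True, None, "none"),
]

summary = unhealthy_by_team(statuses, now)
for team, clusters in summary.items():
    for name, minutes, change in clusters:
        print(f"{team}: {name} degraded for {minutes} min, last change: {change}")
```

Note that team and cluster name are bounded, low-cardinality dimensions; free-text fields such as the last change belong in events or logs rather than metric labels.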

6. Platform engineering has clearly become a measurement problem

The Norwegian public-sector platform maturity talk was one of the better reality checks of the week.

Platform adoption has clearly won. Internal developer platforms and Kubernetes are widespread, and tooling is converging even without central mandates. But measurement still lags. Teams know they are building platforms; they are less clear on how to prove those platforms are successful.

That gap matters. If teams cannot define what success looks like, they cannot prioritise the right improvements, justify investment, or distinguish real self-service from internal process theatre.
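One way to make "success" less abstract is to compute a handful of numbers from provisioning records. The Python sketch below is a minimal example under stated assumptions: `EnvRequest`, its fields, and the three metrics are hypothetical choices for illustration, not a maturity model presented in the talk.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class EnvRequest:
    """Hypothetical record of one environment request."""
    minutes_to_ready: float
    succeeded: bool
    via_ticket: bool


def summarise(requests):
    """Compute a few illustrative platform metrics from request records."""
    if not requests:
        raise ValueError("need at least one request to summarise")
    total = len(requests)
    ready_times = [r.minutes_to_ready for r in requests if r.succeeded]
    return {
        # Median is less distorted by the occasional multi-day outlier than the mean.
        "median_minutes_to_environment": median(ready_times) if ready_times else None,
        "failure_rate": sum(not r.succeeded for r in requests) / total,
        "ticket_share": sum(r.via_ticket for r in requests) / total,
    }


requests = [
    EnvRequest(minutes_to_ready=12, succeeded=True, via_ticket=False),
    EnvRequest(minutes_to_ready=8, succeeded=True, via_ticket=False),
    EnvRequest(minutes_to_ready=2880, succeeded=True, via_ticket=True),
    EnvRequest(minutes_to_ready=15, succeeded=False, via_ticket=False),
]

metrics = summarise(requests)
for key, value in metrics.items():
    print(f"{key}: {value}")
```

Even a crude baseline like this makes it possible to say whether a change moved anything, which is the part most teams are currently missing.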

7. The edge story is no longer theoretical

The CERN electric glider keynote reminded everyone that cloud native tooling now runs in places where power, network and weather are all hostile constraints.

That is relevant even if you do not run aircraft projects. The same patterns apply to factories, field devices and remote operations where assumptions from datacentre life break down quickly.

The telecom keynote made the same point from another angle: cloud native is no longer confined to datacentres and web apps. It is becoming part of how large, geographically distributed, highly specialised infrastructure is operated.

Current trends that felt real

These were the trends that looked durable rather than fashionable.

1. Governed autonomy is replacing both central control and platform anarchy

The strongest sessions were not advocating fully autonomous systems. They were describing bounded autonomy:

- AI in support channels with approval gates

- action registries and standardised execution layers

- policy engines underneath intelligent orchestration

- self-service with declarative guardrails

This is a much more credible model than either “humans must approve everything manually forever” or “agents will just run the platform.”
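The bounded-autonomy idea can be sketched in a few lines of Python: an agent may only invoke actions that are registered, and registered actions that carry risk wait for a human. `Action`, `ActionRegistry`, and the `approved_by` parameter are hypothetical names for this illustration and do not correspond to the Backstage action registry or any other project's API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    """A named operation an agent is allowed to request."""
    name: str
    run: Callable[[str], str]
    needs_approval: bool


class ActionRegistry:
    """Hypothetical registry: unknown actions are rejected, risky ones are gated."""

    def __init__(self):
        self._actions = {}

    def register(self, action):
        self._actions[action.name] = action

    def execute(self, name, target, approved_by=None):
        action = self._actions.get(name)
        if action is None:
            return f"rejected: '{name}' is not a registered action"
        if action.needs_approval and approved_by is None:
            return f"pending: '{name}' on {target} needs human approval"
        return action.run(target)


registry = ActionRegistry()
registry.register(Action("describe", lambda t: f"described {t}", needs_approval=False))
registry.register(Action("restart", lambda t: f"restarted {t}", needs_approval=True))

print(registry.execute("describe", "checkout"))
print(registry.execute("restart", "checkout"))
print(registry.execute("restart", "checkout", approved_by="alice"))
print(registry.execute("delete-namespace", "checkout"))
```

The registry is the boundary: read-only actions run freely, mutating ones are gated, and anything unregistered is simply not available to the agent.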

2. Internal platforms are becoming capability marketplaces

Abby Bangser’s keynote was especially clear on this point. Platform teams cannot scale simply by adding more platform engineers. The longer-term answer is to let domain experts publish reusable platform capabilities while the platform team owns the standards, interfaces, and operating model.

That is a significant evolution from the older internal-platform-as-a-central-team model.

3. Kubernetes is expanding from a container runtime substrate to a broader systems operating layer

This showed up in multiple places:

- accelerated and AI workloads

- multiplayer gaming with Agones

- edge and telecom environments

- virtual clusters for developer environments

Kubernetes is no longer interesting only because it schedules containers. It is interesting because it provides a stable operational contract across increasingly different workload types.

4. The quality of metadata and interfaces is becoming a first-order platform concern

Backstage, agentic tooling, software catalog discussions, and even policy skills all pointed to the same issue: if metadata is stale, interfaces are inconsistent, or documentation is only designed for humans, the platform becomes harder to automate safely.

That is why catalog quality, policy reports, ownership labels, and machine-readable contracts kept resurfacing.

Exciting developments worth watching

These were the developments that felt most likely to compound over the next 12-18 months.

1. Backstage as a true multi-surface control plane

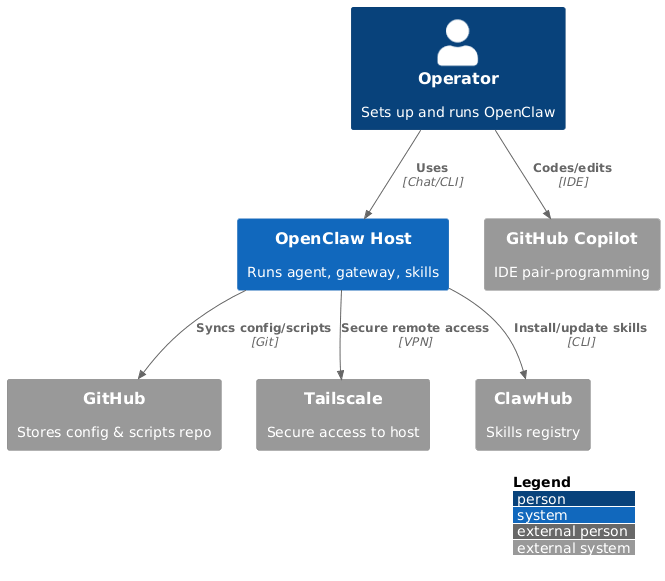

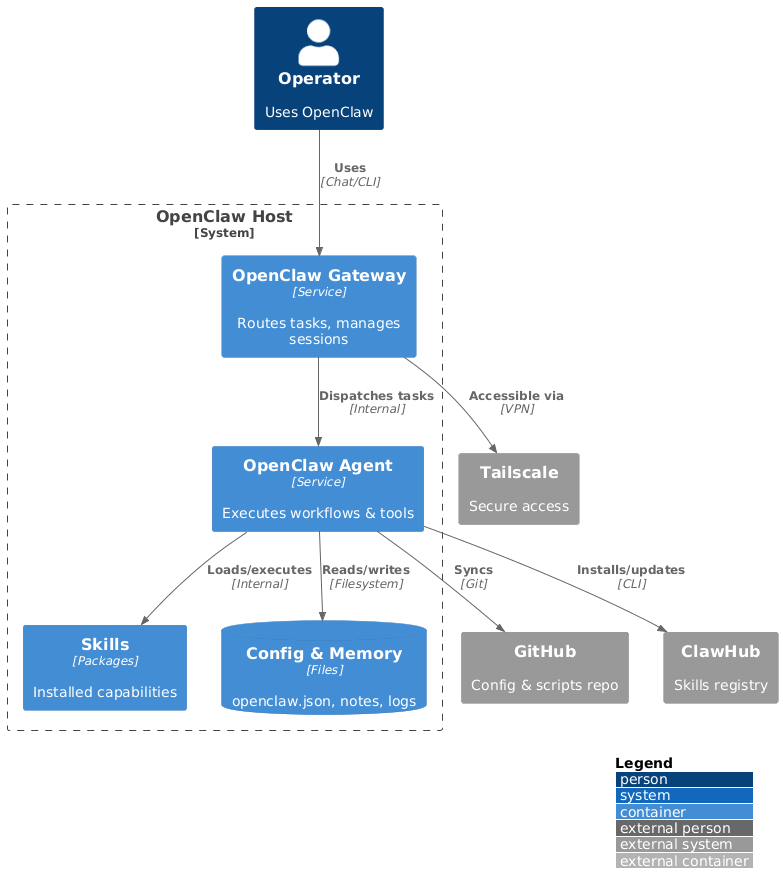

This is more interesting than “Backstage with AI.” If the action registry, catalog, permissions, CLI, and MCP surfaces keep converging, Backstage becomes a much more serious operational layer for internal platforms.

2. Closed-loop policy governance

Kyverno plus agentic orchestration is still early, but the direction is right: operators asking for reports, checks, installs, and remediation in one bounded flow rather than moving manually across ten tools.

3. Ticketless self-service environments with hard guardrails

The DigitalOcean session was one of the most practically useful examples at the conference because it showed a clear before-and-after operating model. It is easy to imagine many teams copying that architecture directly.

4. Platform engineering standards work getting more operational

TAG DevEx and the wider platform engineering community are moving from broad theory into scoped, measurable initiatives and reusable models. That is a healthier sign than another year of generic “platforms are products” slogans.

5. AI infrastructure normalising as a platform concern

The accelerator-native keynote, GPU fragmentation lightning talk, and agent-experience keynote all reinforced the same thing: AI is no longer a special side track. It is becoming another platform domain that needs scheduling, governance, observability, and usable interfaces.

What to do on Monday

If I had to boil this down into an action plan:

- Pick one high-friction operational workflow and reduce it with bounded automation rather than adding a new portal surface.

- Define one platform capability your developers request repeatedly and expose it as a productised API or template instead of a ticket.

- Tighten service catalog metadata, ownership fields, and machine-readable platform contracts before layering in more agents.

- Measure platform success explicitly: time to environment, ticket volume, adoption, failure rate, and developer satisfaction.

- Package repeated troubleshooting and governance work into reusable workflows, skills, or paved roads.

- Audit where your current platform still depends on specialist memory rather than sharable operational capability.

- Treat AI platform integration as a governance and interface design problem, not only a tooling problem.

None of this needs a big-bang rewrite. Small, boring improvements compound fast.

Where to go deeper

Platform operating models

- KubeCon EU 2026 made one thing clear: platform teams are product teams

- Platform engineering is a sociotechnical problem

- TAG DevEx in action: a practical model for reducing developer friction

AI, agents, and governance

- AI-driven GitOps with MCP and Argo CD

- AI governance for platform teams: Agents in production without losing control

- Agentic policy management: Kyverno, MCP, and closed-loop multi-cluster governance

Platform APIs and delivery systems

- Building self-service platforms with Crossplane v2.0

- Crossplane v2: API-first platforms and compositional control planes

- From GitOps to AIOps in regulated environments

Developer surfaces and control planes

- The future of IDPs: Agentic Backstage

- Backstage in 2026: one platform model, many operating surfaces

Official repos and resources

These are the primary project links that map most directly to the talks and posts above.

Platform APIs, GitOps, and self-service

- Crossplane and Crossplane docs

- Argo CD, Argo Workflows, and Argo Events

- Backstage, Backstage docs, and Backstage community resources

- vCluster docs

Policy, agents, and governed autonomy

- Kyverno and Kyverno docs

- Kagent and kMCP

- Kyverno skills reference implementation

- Model Context Protocol, the MCP specification, and the official GitHub organisation

- Dapr Agents and Dapr Agents docs

Observability, performance, and runtime operations

- OpenTelemetry docs and the OpenTelemetry website/docs repository

- automaxprocs

- PyTorch GPU memory stats reference

Broader cloud-native operating patterns

- Agones

- KubeEdge and KubeEdge docs

- CNCF TAG App Delivery and the archived TAG App Delivery working repository